CRIM’s computer vision team is called upon to solve all kinds of image and video analysis problems in fields as varied as industrial inspection, dermatology, microscopic imaging, 3D imaging, etc.

Recently, we have been working on the automated photo-identification of animals, which plays a crucial role in the understanding of ecosystems. This problem is increasingly studied, in part because of the growing use of camera traps that capture high volumes of images of wild animals crossing their field of view. These volumes are simply too large to be processed manually by researchers. Research platforms such as wildme.org, an important tool promoting citizen science, also require image analysis algorithms so that their users can collect data about how the populations of multiple species evolve.

Context

The Mingan Islands Cetacean Study (MICS) research organization contacted the CRIM team and offered its images of blue whales to help automate the photo-identification process. Whale photo-identification is a demanding activity that requires time and advanced expertise: finding a match between a photo and a known individual can take several tens of minutes when the catalog of individuals has hundreds of entries. To see why, consider the figure below, which shows an unknown whale:

That same whale also appears in one of the three following images. Can you tell which one, and thus pass the photo-identification test? 😁

A)

B)

C)

The photo that matches the unknown whale is in Figure C (taken three years earlier). Unsurprisingly, the idea is to check whether the mottling of the two individuals is the same [1].

Naturally, developing an automated photo-id method could greatly assist biologists in finding corresponding pairs of photos, for instance by narrowing down the list of potential candidates. Over the last 40 years, MICS has collected thousands of images of cetaceans with rich metadata. Can all this data be exploited by AI/vision techniques to assist researchers in their photo-id activities? And which techniques are the most promising?

Automated Photo-id: Strategy and Solutions

Our literature review on the automated photo-identification of blue whales returned few results: the problem has barely been studied in the scientific literature. One might consider starting from algorithms developed for photo-identifying another whale species; the difficulty is that the photo-id process can be very different from one species to another. In some cases the fluke is distinctive; in others, the head or the dorsal fin is the anatomical element to consider.

In the case of the blue whale, skin pigmentation patterns allow photo-identification, as we saw earlier with the short quiz, and the most promising algorithmic lead is a family of techniques developed for the extraction and comparison of local features in the image. Local features are signatures attached to precise locations in an image (e.g., belonging to an object) which possess invariance properties, i.e., the signatures have roughly the same numerical values even when the appearance of the object changes due to a different viewpoint or type of illumination.

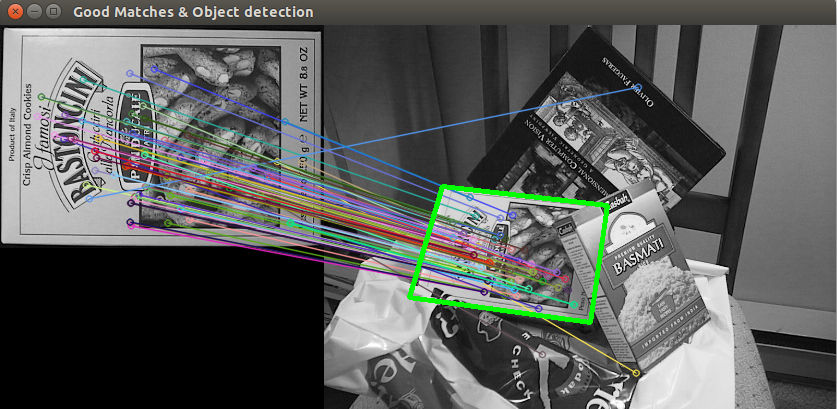

The next figure illustrates the idea, where visually similar points can be connected automatically because their signatures are similar, even though the appearance of the object is different (rotation, tilt, smaller size):

In this context, like the cookie box above, two images of the same whale will share many points of correspondence: the higher the number of correspondences, the higher the chance that these images come from the same individual.

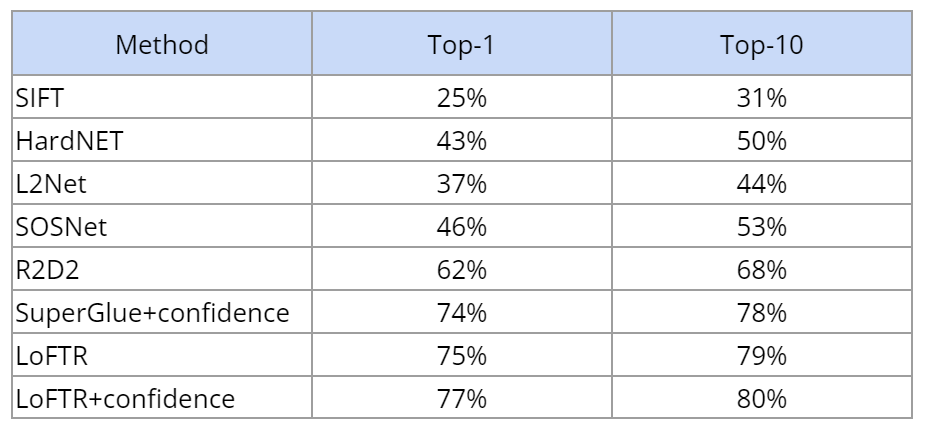

We have evaluated several algorithms applied to the extraction and comparison of local features on a large dataset (807 individuals, 3129 images in total). They can be classified as follows:

- Classical: signatures are computed using procedures hand-crafted by vision researchers, and they are compared with the Euclidean distance (SIFT is a good example);

- Based on neural networks trained to generate a signature for each point;

- End-to-end type of neural network architectures that take as input a pair of images and output a list of correspondences.
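As a rough illustration of the classical family, the sketch below matches two sets of signatures using the Euclidean distance. It is not the code evaluated in this study: the function name `match_descriptors`, the mutual-nearest-neighbour check, the `max_distance` threshold and the toy 3-dimensional signatures are all assumptions made for the example (real SIFT signatures have 128 dimensions).

```python
import math


def match_descriptors(desc_a, desc_b, max_distance=0.5):
    """Return (i, j) index pairs that are mutual nearest neighbours.

    desc_a and desc_b are lists of equal-length numeric tuples (signatures).
    max_distance is a hypothetical threshold chosen for this toy example.
    """

    def nearest(query, pool):
        # Index of the signature in `pool` closest to `query` (Euclidean distance).
        return min(range(len(pool)), key=lambda k: math.dist(query, pool[k]))

    matches = []
    for i, signature in enumerate(desc_a):
        j = nearest(signature, desc_b)
        # Keep the pair only if the match is reciprocal and close enough.
        reciprocal = nearest(desc_b[j], desc_a) == i
        if reciprocal and math.dist(signature, desc_b[j]) <= max_distance:
            matches.append((i, j))
    return matches


# Toy signatures standing in for the points detected in two images.
image_a = [(0.0, 0.0, 1.0), (1.0, 0.0, 0.0), (0.5, 0.5, 0.5)]
image_b = [(1.0, 0.1, 0.0), (0.0, 0.1, 1.0), (9.0, 9.0, 9.0)]

matches = match_descriptors(image_a, image_b)
print(matches)  # [(0, 1), (1, 0)]
```

The third point of each image has no convincing counterpart in the other image, so it is left unmatched; only reciprocal, close pairs count as correspondences.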

The algorithms are designed to find correspondences between an image of a blue whale (with known name or identity) and images from the complete dataset, and then produce a list of ten candidate names for that image. Results are given in the table below, where the “top-10” criterion reflects the proportion of images whose associated whale name appears in the list, and “top-1” is the proportion of images with perfect identification (the hypothesis for the most likely name found by the algorithm is indeed the correct one).
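The ranking and the two criteria can be sketched as follows. This is only an illustration under stated assumptions: the names `rank_candidates` and `top_k_accuracy`, the image and whale identifiers, and the choice to score each individual by its best-matching catalog image are hypothetical and not taken from the study.

```python
def rank_candidates(match_counts, image_names, k=10):
    """Return up to k candidate names, best first.

    match_counts: {catalog image id: number of correspondences with the query}
    image_names:  {catalog image id: name of the whale shown in that image}
    Assumption: an individual is scored by its best-matching catalog image.
    """
    best = {}
    for image_id, count in match_counts.items():
        name = image_names[image_id]
        best[name] = max(best.get(name, 0), count)
    # Sort by decreasing score; ties are broken alphabetically for determinism.
    ranked = sorted(best, key=lambda name: (-best[name], name))
    return ranked[:k]


def top_k_accuracy(candidate_lists, true_names, k):
    """Proportion of queries whose true name is among the first k candidates."""
    hits = sum(true in cands[:k] for cands, true in zip(candidate_lists, true_names))
    return hits / len(true_names)


# Hypothetical catalog of four images showing three individuals.
image_names = {"img1": "B001", "img2": "B001", "img3": "B002", "img4": "B003"}

# Hypothetical correspondence counts for two query images.
query_1 = {"img1": 12, "img2": 40, "img3": 25, "img4": 3}
query_2 = {"img1": 30, "img2": 5, "img3": 18, "img4": 22}

candidates = [rank_candidates(q, image_names) for q in (query_1, query_2)]
truth = ["B001", "B003"]

top1 = top_k_accuracy(candidates, truth, k=1)
top2 = top_k_accuracy(candidates, truth, k=2)
print(candidates, top1, top2)
```

In this toy case the first query is identified perfectly, while the second has its true name only in second position, so the top-1 score is 0.5 and the top-2 score is 1.0. In practice the query image itself would be excluded from the catalog before matching.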

Two points should be highlighted:

- Performance variability between methods is high, which is probably a good indication that this problem of identifying blue whales from photographs is difficult.

- Best results are obtained using end-to-end methods like LoFTR or SuperGlue. The other approaches break the problem into three steps (search for interest points in each image, computation of the signature by a neural network, search for similar signatures between two images), and this decomposition clearly turns out to be less effective.

Note that end-to-end methods also provide a degree of confidence for each correspondence; using this information yields slightly better performance than simply counting the correspondences.
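To see why confidences can change a ranking, here is a minimal sketch comparing the two scoring rules. The text does not specify how the confidences are combined, so summing them is an assumption made for this example, and the function names and values are hypothetical.

```python
def count_score(confidences):
    """Score an image pair by its number of correspondences."""
    return len(confidences)


def confidence_score(confidences):
    """Score an image pair by the sum of its correspondence confidences.

    Assumption: summing is one simple way to use the confidences.
    """
    return sum(confidences)


# Hypothetical confidences returned for two candidate image pairs.
many_weak = [0.2, 0.2, 0.2, 0.2, 0.2]   # five doubtful correspondences
few_strong = [0.9, 0.9, 0.9, 0.9]       # four reliable correspondences

print(count_score(many_weak), count_score(few_strong))
print(confidence_score(many_weak), confidence_score(few_strong))
```

With the plain count, the pair with five doubtful correspondences ranks first; with the confidence-based score, the pair with four reliable correspondences wins.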

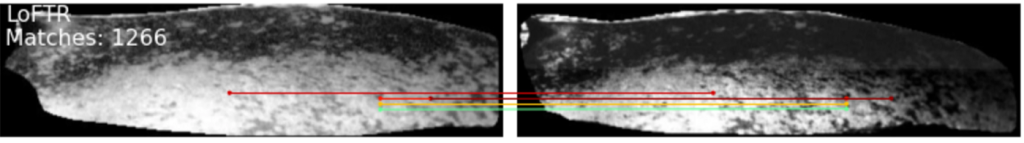

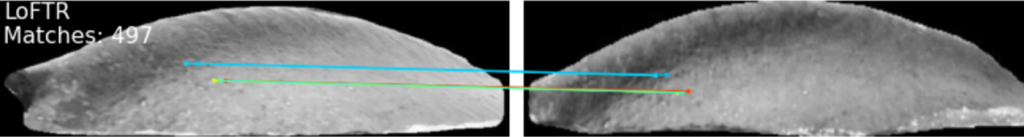

This last method, LoFTR [2], gives impressive results in terms of its ability to find correspondences. Close examination (below) shows that the points connected by the colored lines correspond to similar areas, which indicates that the two images show the same whale:

For more details, interested readers can consult a publication [3] presented at the “Computer Vision for Analysis of Underwater Imagery (CVAUI) 2022” conference last August. The master class held on September 23rd (available below; in French) provides additional details about the proposed approach to the blue whale photo-identification problem.

Conclusion

Thanks to MICS’s rich database, CRIM’s experts have been able to develop blue whale image matching algorithms that are promising despite the difficulty of the task. Beyond the photo-identification of whales, many other problems involving image comparison and object detection can benefit from the powerful computer vision tools mentioned in this post.

References

[1] R. Sears et al. “Photographic identification of the blue whale (Balaenoptera musculus) in the Gulf of St. Lawrence, Canada”.

[2] J. Sun et al. “LoFTR: Detector-Free Local Feature Matching with Transformers”.

[3] M. Lalonde et al. “Automated blue whale photo-identification using local feature matching”.